f<a+i>r Southeast Asia Hub

The Feminist AI Hackathon was successfully held on March 7-8, 2023 at the Mandarin Hotel, Bangkok.

The Hackathon is part of the activities of the Southeast Asian Hub of the “Incubating Feminist AI Project,” funded by the IDRC, Canada.

Eight teams took part in the competition. The participants came from all age groups, ranging from Grade 11 students from many schools to working-age people from government organizations and general members of the public, all of whom were interested in how to create AI systems that contribute to an equal and quality society.

The Hackathon started on March 7 with Dr. Soraj speaking for about fifteen minutes detailing the rules of the Hackathon as well as its objectives. After that each team went to various places within the hotel to brainstorm and to create their presentation.

The objective of the Hackathon was to suggest ways in which AI could be used to create an equal society, especially on gender issues. Since there was not enough time to develop anything substantial, the aim was to come up with ideas that could lead to effective apps or policy innovations utilizing the power of AI.

The eight teams in the Hackathon were as follows:

The first team consisted of five high school students from Chonburi. They presented an idea for an app called LUNCH, which was designed to help sex workers by sharing information and sending out SOS messages in case they were in danger.

The second team was from the Faculty of Engineering, Chulalongkorn University. These undergrads came up with an app designed to modify speaking voices on telephone answering systems. The students said that biases could happen when a caller called a company and the answering system replied with a kind of voice that the caller did not like or which the caller had biases against.

The next team came from many different high schools from provinces outside of Bangkok. An interesting idea presented by this team was that their app was designed to search for keywords that created inequality or biases in conversation. This was to create a smooth online experience free from gender biases.

The fourth team also consisted of high school students, from a well-known school in Bangkok. They came up with an app called “Law4All,” a chatbot that functioned as a legal consultant especially for women, who are often disadvantaged when it comes to access to legal services. The app offered legal advice and relevant information about the law that women needed to know. The team also did some actual coding and presented an early prototype of the app to the audience.

The next team consisted of only two members, middle-aged gentlemen who were genuine amateurs but were interested in thinking about AI. Their app, called “Uan AI” after the name of one of the team members, was designed to create a caring community on issues related to gender equality. They proposed that search engines label their results in color-coded categories: green meant that a result was OK and safe, yellow meant that some caution was needed, and red meant that the result was strictly off limits.

The sixth team also consisted of adult members, but they were from a government organization specializing in geoinformatics. They presented an app called “Kaifeng Platform,” named after the city of Kaifeng in Song Dynasty China, where the renowned judge Bao Zheng, also known as Bao Qing Tian, a Chinese bureaucrat revered for his justice, held office. The app aimed at using natural language processing to search for inequalities in the letter of Thai law so that appropriate action could be taken later.

The next team also came from the Faculty of Engineering, Chulalongkorn University, but this team consisted of graduate students. They presented an app called “Friend of Teen Moms,” designed to help teenage girls who became pregnant against their will. The app was a chatbot that provided advice to the girls as well as needed information, such as where they could go to get a safe abortion.

The last team consisted of only two members. One was a recent graduate from the Faculty of Psychology, Chulalongkorn University, and the other was a Thai high school student who was studying in the US. Their app was called “Eudaimonium,” from the Greek eudaimonia, meaning flourishing or happiness. The problem that this app aimed to solve is one that many people face when communicating on social media: they have difficulty presenting themselves to the outside world. Apps such as Tinder, for example, usually present profile pictures which are often inauthentic, and in other apps users also face the problem of how to present themselves. The app provides a social media platform which lets users communicate with their friends freely and without becoming self-conscious.

It can be seen that each of the teams presented very interesting ideas, and many of these ideas could be developed into useful and innovative apps.

The results of the Hackathon were as follows:

* First Prize: Team Eight, “Eudaimonium”

* Second Prize: Team Four, “Law4All”

* Third Prize: Team Six, “Kaifeng Platform,” and Team Seven, “Friend of Teen Moms”

Each team was given prize money and certificates.

The organizing committee would like to thank the members of each team for participating in the Hackathon, and looks forward to further activities on Feminist AI in the near future.

Soraj Hongladarom is Professor of Philosophy and Director of the Center for Science, Technology, and Society at Chulalongkorn University. His areas of research include applied ethics, philosophy of technology, and non-western perspectives on the ethics of science and technology.

Supavadee Aramvith is Associate Professor of Electrical Engineering and Head of the Multimedia Data Analytics and Processing Research Unit, Chulalongkorn University. Her areas of research include video signal processing, AI-based video analytics, and multimedia communication technology. She is very active in the international arena, holding leadership positions in international networks such as the JICA Project for AUN/SEED-Net and in professional organizations such as IEEE, IEICE, APSIPA, and ITU.

Siraprapa Chavanayarn is an Associate Professor of Philosophy and a Member of the Center for Science, Technology, and Society at Chulalongkorn University. Her areas of research include epistemology, especially social epistemology, and virtue epistemology.

Speakers: Proadpran Punyabukkana and Naruemon Pratanwanich

October 11, 2021, 3.30 – 5.00pm, Thailand Time

The first network meeting of the Southeast Asian Hub of the Incubating Feminist AI Project was held on Monday, October 11, 2021, from 3.30 pm to 5 pm on Zoom. The main speakers were Proadpran Punyabukkana and Naruemon Pratanwanich. Proadpran is Associate Professor of Computer Engineering at the Faculty of Engineering, Chulalongkorn University, and Naruemon is Assistant Professor of Computer Science at the Department of Mathematics and Computer Science, Faculty of Science, also at Chulalongkorn. The purpose of the meeting was to introduce the Feminist AI Project to the public in Southeast Asia and to talk about general issues concerning gender biases and other forms of gender inequality in AI. Around 15 people attended. Proadpran and Naruemon, who is a former student of Proadpran’s, talked about their work, which included assistive technology for the elderly and other forms of technology designed to help those who are disabled. Proadpran also talked about the number of women working in technical fields in the Global South. For her part, Naruemon talked about the need for computer scientists to learn more about their social environment and about the need for AI to be free from biases, which come from the data that are fed into the algorithm. The talk ended with an announcement of the call for papers for the Feminist AI Project and questions from the audience.

Key Issues discussed

Key recommendations for action

January 31, 2022

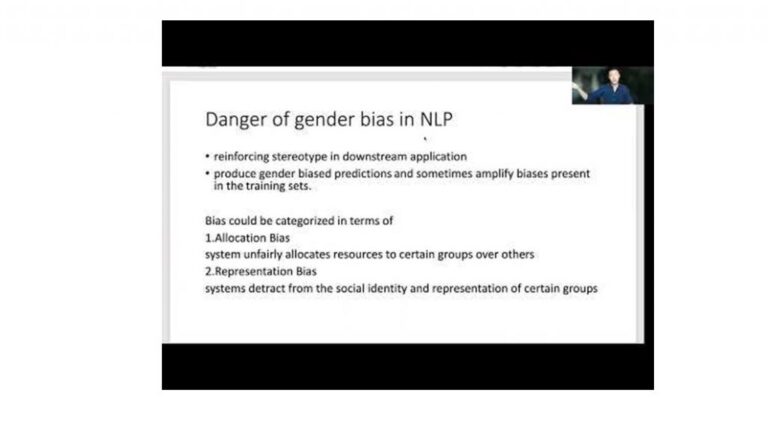

The second network meeting of the Southeast Asia Hub of the Incubating Feminist AI Project was held on January 31, 2022 from 4 to 5.30 pm Thailand time, also via Zoom. The audience was larger than at the first meeting in October: more than 50 people registered for the event and around 35 actually attended. The meeting was led by Associate Professor Attapol Rutherford from the Department of Linguistics, Faculty of Arts, Chulalongkorn University. Attapol is an example of the new generation of scholars who work right across disciplinary boundaries. He was educated as a computer scientist, having graduated with a Ph.D. in computer science from Brandeis University, but he is now working as a linguist at the Faculty of Arts, Chulalongkorn University, a traditional bastion of humanistic studies in Thailand. The topic of his talk was “Gender Bias in AI.” He presented a very clear account of current research on gender bias in AI, giving examples from a wide variety of languages, such as Hungarian, English, Chinese, and Thai. The key idea of his talk was that, in analyzing natural languages, AI algorithms working on data obtained from real-life usage tend to mirror the biases that are already present in the data themselves. His talk is very useful for those who would like to start research in the field, and he pointed out work that needs to be done in order to combat the gender problem in AI. He suggested ways to ‘de-bias’ AI through a variety of means. Basically, this involves constant input and monitoring of how AI does its job.

Key Issues discussed

Key recommendations for action

AI can, and should, be made more gender friendly through both technical means


June 6, 2022, 3.30 – 5.00pm, Thailand Time

Background on the event

Our fourth Network Meeting took place on June 6, 2022, after a rescheduling. The talk was led by Jun-E Tan from Malaysia. Jun-E is a scholar and policy researcher who has been involved in the issue of AI governance, especially in Southeast Asia, which was the topic of her talk in this Network Meeting. Dr. Tan opened by explaining what AI is and what security risks the technology creates. The risks were divided into four categories: digital/physical, political, economic, and social. An example of the first category is the potential for AI to cause physical harm or attacks. Political risks include disinformation and surveillance, economic risks include the widening gap between the rich and the poor, and social risks include threats to privacy and human rights. These were only some of the risks that Dr. Tan mentioned. She then talked about how these risks could be mitigated through a system of governance. This included rapid responses by governments and the adaptation of international norms such as the GDPR with some degree of localization. She also presented some of the challenges that Southeast Asian governments need to face, such as the fact that these governments, and the region in general, do not have a strong voice in the international arena. There are also existing and ingrained challenges such as a lack of technical expertise, authoritarian regimes, and weak institutional frameworks. After her talk there was a lively discussion among the audience, which included how the system of governance here could promote the use of AI in a way that creates a more gender-equal society.

Key Issues discussed

● Physical, political, economic, and social risks of AI

● Challenges facing Southeast Asian governments

Key recommendations for action

● Anchor AI governance in its societal contexts

● Build constitutionality around AI and data governance

● Enable whole-of-society participation in AI governance

Background on the event

In this fifth session of the Asia Network Meeting, which was the last one for the first year of the Project, Eleonore Fournier-Tombs and Matthew Dailey talked about “Gender-Sensitive AI Policy in Southeast Asia.” Fournier-Tombs is a global affairs researcher specializing in technology, gender, and international organizations. Matthew Dailey is a professor of computer science at the Asian Institute of Technology. Fournier-Tombs started by pointing out various risks that AI poses for women, such as loan apps giving out more money to men than to women, job applications from women being downgraded by AI, and so on. She also talked about stereotyping through the use of language, as well as some of the socio-economic impacts that this stereotyping and discrimination have caused. Then she talked about the project that she and her colleague Matthew Dailey were undertaking, in which they looked at the AI situation in four countries in Southeast Asia, namely Malaysia, the Philippines, Thailand, and Indonesia. They found that all four countries had their own AI roadmap policies, but only Thailand had a fully functioning official AI ethics policy guideline. Toward the end of her talk she discussed how the instrument of the Universal Declaration of Human Rights was translated into working documents on AI policy, especially in the region. After Fournier-Tombs’ talk, Matthew Dailey followed with a discussion of the projects that he was working on with Fournier-Tombs and those that he was working on with his students. The latter consisted of projects that applied AI technology to various uses in Thailand, such as facial recognition and regulating the unruly Thai urban traffic. There was a lively discussion among the audience at the end.

Key Issues discussed

AI policies in Southeast Asian countries

How women are impacted by AI and what instruments are there to mitigate the impact

How four countries in Southeast Asia responded to the AI challenge

Key recommendations for action

More study of how global mechanisms such as the Universal Declaration of Human Rights become operative in the field of AI policy

Research and development on gender-sensitive AI

The 5th Network Meeting

Background on the event

Stephanie Dimatulac Santos is a professor at the Metropolitan State University of Denver. She is currently conducting a research project on digital labor in affiliation with the Center for Science, Technology, and Society at Chulalongkorn University from January to June 2023, under the auspices of a Fulbright Fellowship grant. Her talk in the sixth Asia Network Meeting was entitled “Labors of Artificial Artificial Intelligence,” and it looked at the situation of the digital laborers who toil behind the scenes, making it possible for giant multinational companies to employ the full power of AI in their services to their users. She talked about the plight of such laborers in the Philippines. Since Filipinos are mostly fluent in English, they are regarded as ideal laborers for the AI industry, supplying AI systems with the human power that provides quality control for these systems. Furthermore, more women than men enter this workforce, which adds another layer of complexity, as it is women who are being exploited in this industry. Dr. Santos also talked about how these laborers are exploited, creating a situation that is rather similar to that of manual workers in older industries. Thus, even though the type of technology has changed, the plight and the exploitation of the workers (in this case digital workers) appear to remain the same.

Key Issues Discussed

• Digital laborers

• Women in digital labor

• Need for a hard look at the situation of manual (digital and intellectual) workers in the AI industry

Key Recommendations for Action

• The rights of these women digital workers need to be respected

• Clear policy on how to provide fair compensation and welfare to these workers

• We need to pay attention to what is happening behind the scenes in the AI industry

Background on the event

The winning team of the Feminist AI Hackathon, which was held on March 7-8, 2023 in Bangkok, Thailand, came to talk about their work at the seventh ASIA Network Meeting on May 16, 2023 from 7 to 8 pm on Zoom. The team consists of Suppanat Sakprasert, a recent bachelor’s degree graduate in psychology from Chulalongkorn University, and Parima Choopungart, a high school student from the US. They talked about their project, Eudaimonium, an idea for an app where men and women can get together as friends without the baggage that makes women especially feel vulnerable or pressured. Drawing on Suppanat’s psychology background, the app is designed to lessen the psychological images that usually accompany self-introduction and profiling in social media apps. The user retains total control of how they appear in the app, making it less likely for biases and judgmental attitudes to arise. Users can then reveal more of themselves as they gain trust with their selected friends.

Key Issues discussed

Psychological harm arising from engaging in social media

How to gain trust on social media

Here is a post by Suppanat about their work

The co-leader of the Southeast Asia Hub of the Feminist AI Project, Supavadee Aramvith from the Faculty of Engineering, Chulalongkorn University, spoke at the Eighth ASIA Network Meeting on “Inclusive AI Design.” The talk was held on Zoom on September 18, 2023, from 8 to 9.30 pm. In the talk, Supavadee focused on the more technical aspects of AI design. She introduced the concepts of artificial intelligence and machine learning for those of us who might not already have adequate background knowledge of the topics. Then she talked about how to design AI so as to be inclusive. For her, “inclusive AI” refers to an AI system built around algorithms designed to be fair and unbiased towards all individuals and groups, regardless of their race, gender, age, religion, and other personal characteristics. The goal of inclusive AI algorithms is to ensure that the decisions and outcomes produced by the algorithm do not result in discrimination or inequality.

Key Issues discussed

Basic understanding of AI and machine learning

Details about Inclusive AI and Design

EVENTS SUMMARY

Background on the event

The Ninth ASIA Network Meeting of the Southeast Asia hub of the “Incubating Feminist AI” Project featured a talk by Stephanie Santos on “The Gig Economy in Southeast Asia” on December 7, 2023, at 9 pm Bangkok time (GMT +7). In this talk, Stephanie Santos drew on the cultural production and situated testimonies of food delivery riders to examine Southeast Asian gig economies through the life-making practices of their most vulnerable workers. She started by giving an account of the gig economy as one characterized by independent contractors and short-term commitments, where activity is pervasively mediated by internet platforms. Then she gave an overview of the gig economy in various countries in Southeast Asia, where Thailand comes out highest in terms of the size of the economy, at 2.26 billion US dollars, and Indonesia has the highest number of workers, at 2.3 million. Then she presented a series of interviews that she had conducted with gig workers throughout the region, many of whom are women. What ties them together is that these workers lack the kind of security that comes with being a traditional worker. Furthermore, women workers suffer doubly: services performed by women are cancelled more often, and women workers suffer from various kinds of abuse. During the discussion session there was talk about how to remedy the situation, and it was proposed that the legal mechanisms in these countries need to be strengthened.

Key Issues discussed

• The situation of gig workers in Southeast Asia as well as the roles that women workers are playing

• Proposals to ameliorate the situation, including, but not limited to, legal mechanisms

On May 20, 2022, the Southeast Asian Hub of the “Incubating Feminist AI Project” launched its first capacity building workshop, entitled “Feminist AI and AI Ethics,” at the Royal River Hotel in Bangkok. The workshop is part of the series of activities organized by the f<A+i>r network, a group of scholars and activists who have joined together to think about how AI could contribute to a more equal and inclusive society. The Project is supported by a grant from the International Development Research Centre (IDRC), Canada.

The event was attended by around twenty participants from various disciplines and backgrounds. The aim of the workshop was to equip participants with the basic vocabulary and conceptual tools for thinking about the roles that AI could play in engendering a more inclusive society.

The workshop was opened by Suradech Chotiudomphant, Dean of the Faculty of Arts, Chulalongkorn University. Dr. Jittat Fakcharoenphol and Dr. Supavadee Aramvith were also present at the Workshop. Jittat was the lead discussant and took a key role in the group discussion, and Supavadee is a member of the Southeast Asia Hub of the Project. Then Dr. Soraj Hongladarom, Director of the Center for Science, Technology, and Society, presented a talk on “Why Do We Need to Talk about Feminist Issues in AI?” After presenting a brief definition and history of AI, Soraj talked about the reasons why we need to consider feminist issues in AI, as well as other issues concerning social equality. The first reason is that gender equality is essential for the economic development of a nation. A nation where both women and men are given the same opportunities and equal rights is more likely to create prosperity that benefits everyone than a society that does not give women equal rights and opportunities. Furthermore, there is also a moral reason: denying women their rights is wrong because inequality itself is morally wrong. Then he talked about the various ways in which AI has actually been used, intentionally or not, in such a way that the rights of women are violated. For example, AI has been used to calculate the likelihood of loans being repaid. If the dataset is such that women are perceived by the algorithm as being less likely to repay, then there is a bias in the algorithm against women, something that needs to be corrected. Toward the end, Soraj mentioned that the Incubating Feminist AI project was currently launching a call for expressions of interest, to which everyone was invited to submit. Details of the call can be found here.

Afterwards, the actual workshop began, with a lead talk and discussion by Dr. Jittat Fakcharoenphol from the Department of Computer Engineering, Faculty of Engineering, Kasetsart University. Jittat talked about the basic concepts of machine learning, the core of today’s AI, and then presented the group with three cases to discuss, all of which concerned feminist issues in various applications of AI: AI in medicine, facial recognition, and loan and hiring algorithms. The participants divided themselves into three groups, each of which chose a topic and began a lively discussion. After about an hour of group discussion, each group presented to the others what they had discussed and what their recommendations were. The participants showed a strong interest in the topics, and everyone was convinced that AI needs to become more socially aware and that more work needs to be done to see in detail what exactly socially aware AI is going to be.

At the end of the meeting, Dr. Supavadee talked about her reflection on the Workshop and gave a closing speech. The workshop in fact was the first one, to my knowledge, that was engaged with feminist topics in AI, and it was a credit to the IDRC and the Incubating Feminist AI project that a seed was planted in Thailand and in Southeast Asia regarding the awareness that we must consider how AI can contribute to a more equal and more inclusive society, and how the traditional unequal status of women, especially in this part of the world, could be redressed through this technology.
